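Contents

0. Experiment overview

1. Loading the MNIST dataset automatically with TensorFlow

2. A first try at handwritten digit recognition

2.1 Preparing the network structure and optimizer

2.2 Computing the loss and output

2.3 Gradient computation and optimization

2.4 Loop

2.5 Complete code

Supplement: os.environ['TF_CPP_MIN_LOG_LEVEL']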

0. Experiment overview

(Using the binary classification problem shown in the figures as an example)

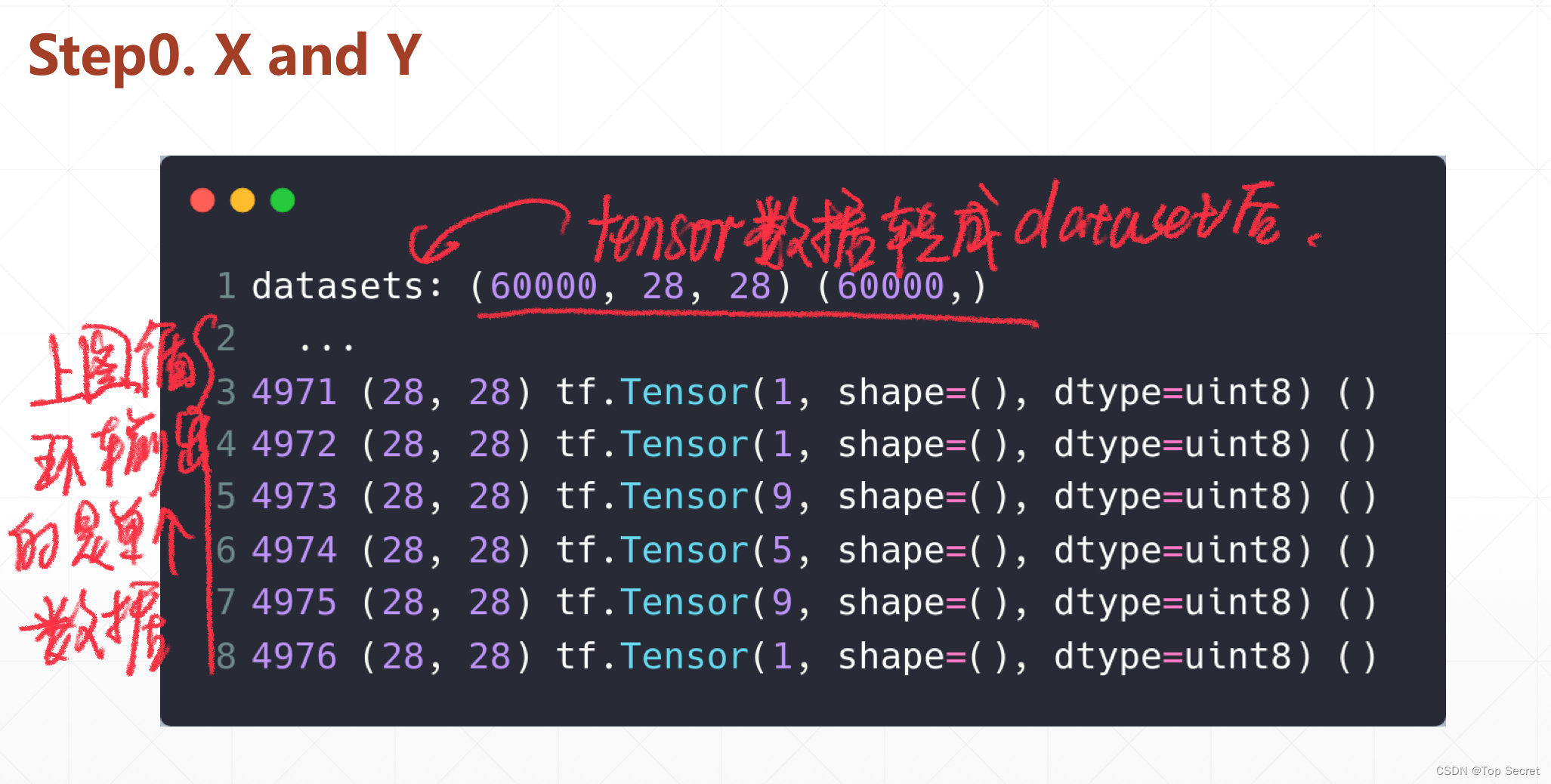

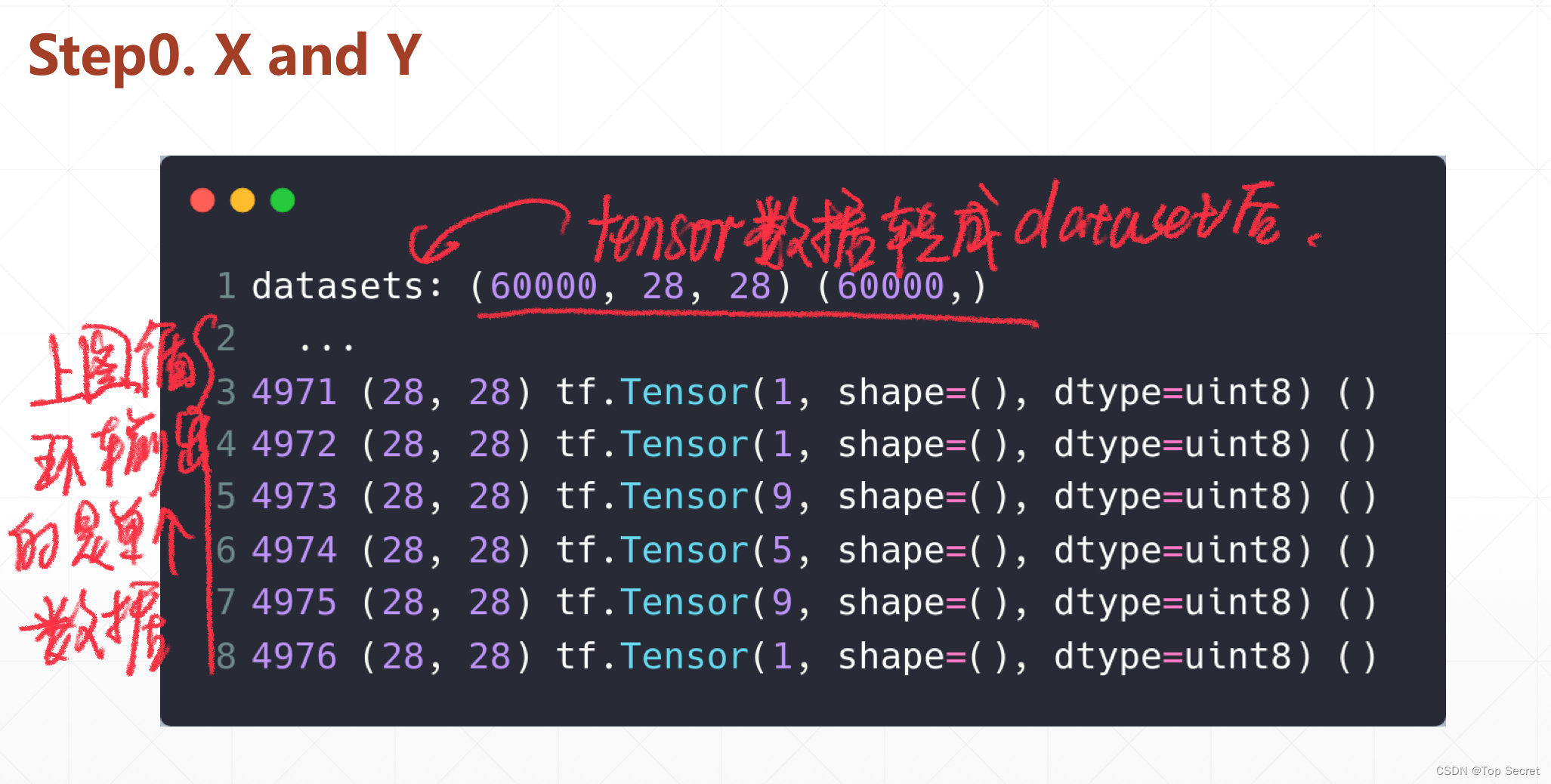

1. Loading the MNIST dataset automatically with TensorFlow

Code:

    import tensorflow as tf
    from tensorflow.keras import datasets, layers, optimizers

    (xs, ys), _ = datasets.mnist.load_data()  # download the MNIST dataset automatically
    print('datasets:', xs.shape, ys.shape)
    xs = tf.convert_to_tensor(xs, dtype=tf.float32) / 255.  # convert to a TensorFlow tensor, scaled to [0, 1]
    db = tf.data.Dataset.from_tensor_slices((xs, ys))  # wrap the downloaded data in a tf.data.Dataset
    for step, (x, y) in enumerate(db):  # print one sample at a time
        print(step, x.shape, y, y.shape)

Code breakdown:
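One way to see what each call in the loading code does, without downloading anything, is to run the same steps on a small synthetic array. The sketch below is an illustration only: `fake_xs` and `fake_ys` are made-up stand-ins for the MNIST arrays, and the batch size of 4 is an arbitrary choice.

```python
import numpy as np
import tensorflow as tf

# Assumption: 10 random 28x28 uint8 "images" stand in for the real MNIST data.
rng = np.random.default_rng(0)
fake_xs = rng.integers(0, 256, size=(10, 28, 28), dtype=np.uint8)
fake_ys = rng.integers(0, 10, size=(10,), dtype=np.uint8)

# Same conversion as in the loading code: uint8 pixels -> float32 in [0, 1].
xs = tf.convert_to_tensor(fake_xs, dtype=tf.float32) / 255.

# from_tensor_slices cuts along the first axis, so each element is one (image, label) pair.
db = tf.data.Dataset.from_tensor_slices((xs, fake_ys))
first_x, first_y = next(iter(db))

# batch() groups consecutive elements; the last batch may be smaller.
batch_shapes = [(tuple(x.shape), tuple(y.shape)) for x, y in db.batch(4)]
print(tuple(first_x.shape), tuple(first_y.shape))
print(batch_shapes)
```

Without `batch()`, iterating the dataset yields one 28x28 image at a time, which is why the loop in the loading code prints a single-sample shape on every step.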

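2. A first try at handwritten digit recognition

2.1 Preparing the network structure and optimizer

Build the network with the Sequential module.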
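As a quick check of what a `Sequential` stack with layer widths 512, 256 and 10 produces, the sketch below feeds it a dummy batch of flattened 28x28 inputs and counts the trainable parameters. The all-zero `dummy` batch is an assumption made only for illustration.

```python
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

model = keras.Sequential([
    layers.Dense(512, activation='relu'),
    layers.Dense(256, activation='relu'),
    layers.Dense(10),
])

# Assumption: a dummy batch of 2 flattened images; the first call builds the weights.
dummy = tf.zeros((2, 28 * 28))
out = model(dummy)

print(out.shape)             # (2, 10)
print(model.count_params())  # 535818 = (784*512+512) + (512*256+256) + (256*10+10)
```

The last layer has no activation, so the 10 outputs are raw scores, one per digit class.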

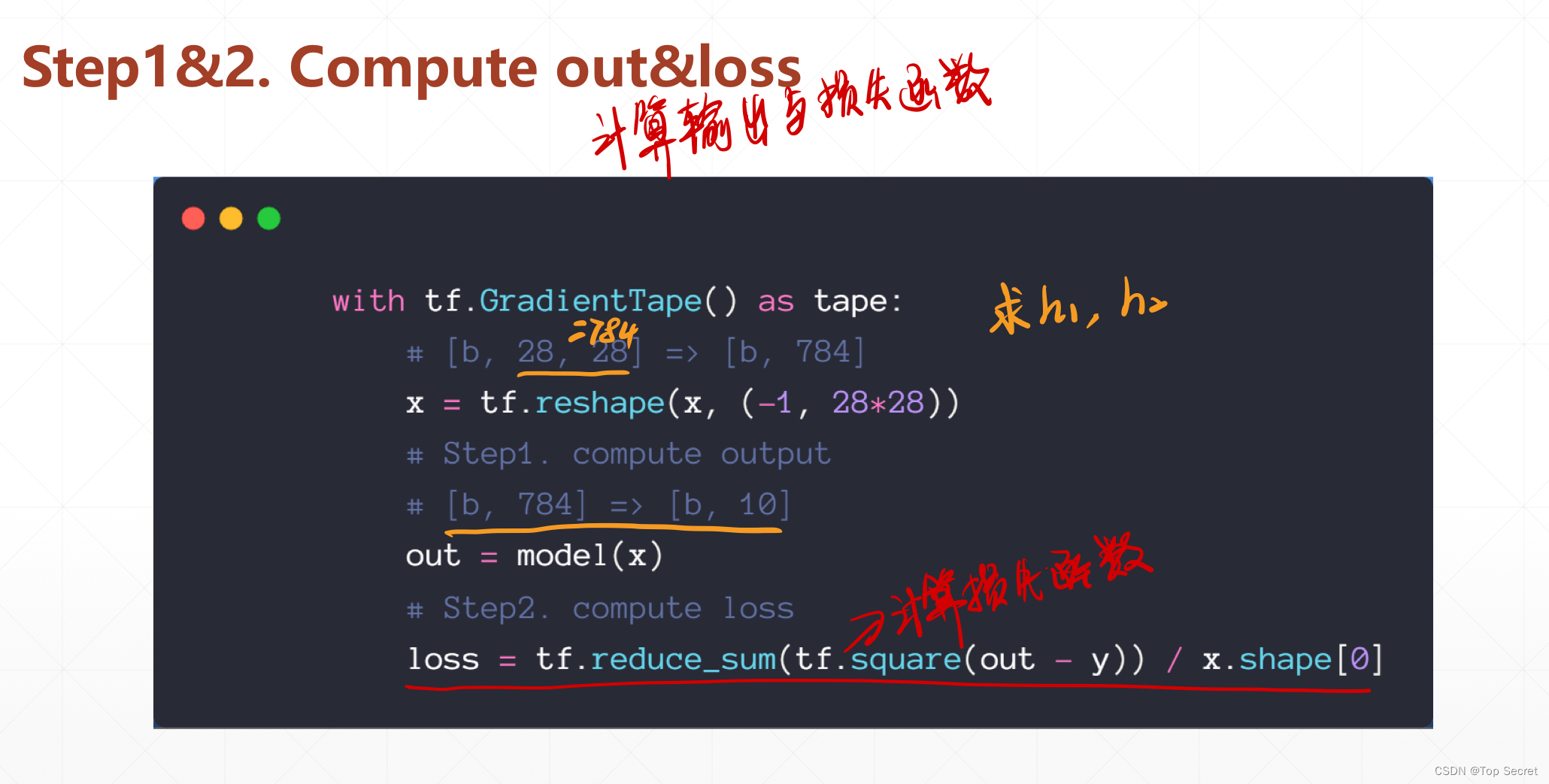

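    # Prepare the network structure and optimizer
    # (keras comes from `from tensorflow import keras`, as in the complete code)
    model = keras.Sequential([  # 3-layer structure
        layers.Dense(512, activation='relu'),
        layers.Dense(256, activation='relu'),
        layers.Dense(10)])
    optimizer = optimizers.SGD(learning_rate=0.001)

2.2 Computing the loss and output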
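The loss in this section is the sum of squared differences between the network output and the label, divided by the batch size. For `out - y` to be well defined, `y` needs the same shape as `out`, which the complete code arranges with `tf.one_hot`. A small worked example with made-up numbers (three classes instead of ten, purely for illustration):

```python
import tensorflow as tf

# Assumption: two samples, three classes, hand-picked outputs.
labels = tf.constant([1, 0])
y = tf.one_hot(labels, depth=3)          # [[0, 1, 0], [1, 0, 0]]
out = tf.constant([[0.0, 1.0, 0.0],      # matches its label exactly
                   [0.5, 0.5, 0.0]])     # off by 0.5 in two positions

# Same formula as in the tutorial: sum of squares / batch size.
loss = tf.reduce_sum(tf.square(out - y)) / out.shape[0]
loss_value = float(loss)
print(loss_value)  # 0.25 = (0 + 0.25 + 0.25) / 2
```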

    with tf.GradientTape() as tape:
        # [b, 28, 28] => [b, 784]
        x = tf.reshape(x, (-1, 28*28))
        # Step1. compute output
        # [b, 784] => [b, 10]
        out = model(x)
        # Step2. compute loss
        loss = tf.reduce_sum(tf.square(out - y)) / x.shape[0]

2.3 Gradient computation and optimization

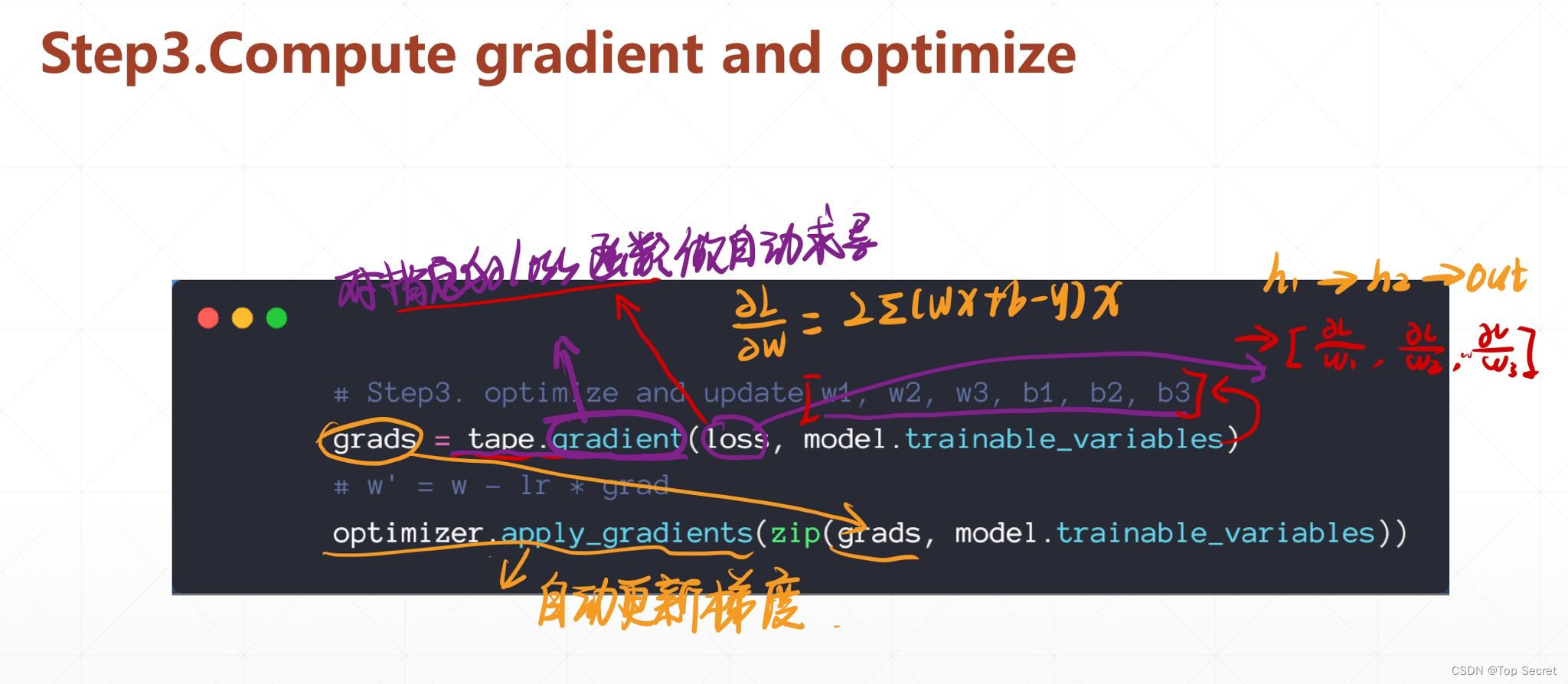

    # Step3. optimize and update w1, w2, w3, b1, b2, b3
    grads = tape.gradient(loss, model.trainable_variables)
    # w' = w - lr * grad
    optimizer.apply_gradients(zip(grads, model.trainable_variables))
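The update rule w' = w - lr * grad can be checked by hand on a model with a single weight. The sketch below is a toy case, not the tutorial's network: a one-unit `Dense` layer without bias, initialised to 1, with an input of 3 and a learning rate of 0.01, all chosen only to keep the arithmetic easy.

```python
import tensorflow as tf
from tensorflow.keras import layers, optimizers

# Assumption: a single weight w (initialised to 1) instead of the full network.
layer = layers.Dense(1, use_bias=False, kernel_initializer='ones')
optimizer = optimizers.SGD(learning_rate=0.01)
x = tf.constant([[3.0]])

with tf.GradientTape() as tape:
    out = layer(x)                        # out = 3 * w
    loss = tf.reduce_sum(tf.square(out))  # loss = 9 * w^2

# d(loss)/dw = 18 * w = 18 at w = 1
grads = tape.gradient(loss, layer.trainable_variables)
# w' = w - lr * grad = 1 - 0.01 * 18 = 0.82
optimizer.apply_gradients(zip(grads, layer.trainable_variables))

grad_value = float(grads[0][0, 0])
new_w = float(layer.trainable_variables[0].numpy()[0, 0])
print(grad_value, new_w)
```

Note that `tape.gradient` is called after the `with` block has closed: the tape only needs to be active while the forward pass and loss are computed.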

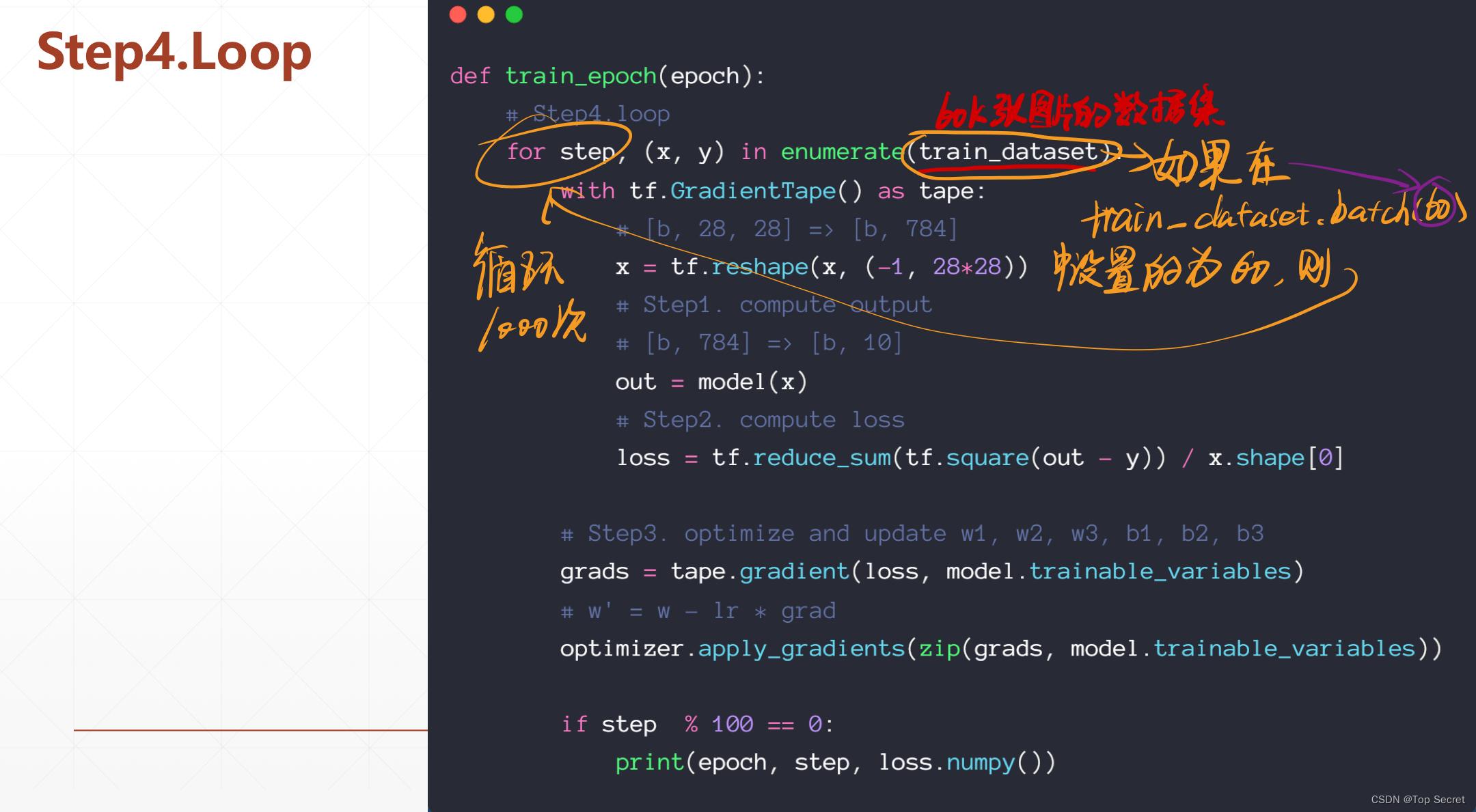

2.4 Loop
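The loop step repeats the forward pass, loss, gradient and update for every batch, and then repeats that over several epochs. A minimal sketch of this structure on a toy problem follows; the data (y = 2x), the single-weight `model`, the learning rate and the epoch count are all assumptions chosen so that the run finishes instantly, not values from the tutorial.

```python
import tensorflow as tf
from tensorflow.keras import layers, optimizers

# Assumption: four samples of y = 2x, split into batches of two.
xs = tf.constant([[1.0], [2.0], [3.0], [4.0]])
ys = 2.0 * xs
dataset = tf.data.Dataset.from_tensor_slices((xs, ys)).batch(2)

# Assumption: one weight, starting at 0, stands in for the network.
model = layers.Dense(1, use_bias=False, kernel_initializer='zeros')
optimizer = optimizers.SGD(learning_rate=0.01)

def train_epoch():
    """One pass over all batches; returns the loss of the last batch."""
    for x, y in dataset:
        with tf.GradientTape() as tape:
            out = model(x)
            loss = tf.reduce_sum(tf.square(out - y)) / x.shape[0]
        grads = tape.gradient(loss, model.trainable_variables)
        optimizer.apply_gradients(zip(grads, model.trainable_variables))
    return float(loss)

losses = [train_epoch() for _ in range(20)]
learned_w = float(model.trainable_variables[0].numpy()[0, 0])
print(losses[0], losses[-1], learned_w)
```

On this toy problem the weight should move towards 2 and the loss should shrink from epoch to epoch, which is the same behaviour one looks for in the printed loss of the full training run.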

2.5 Complete code

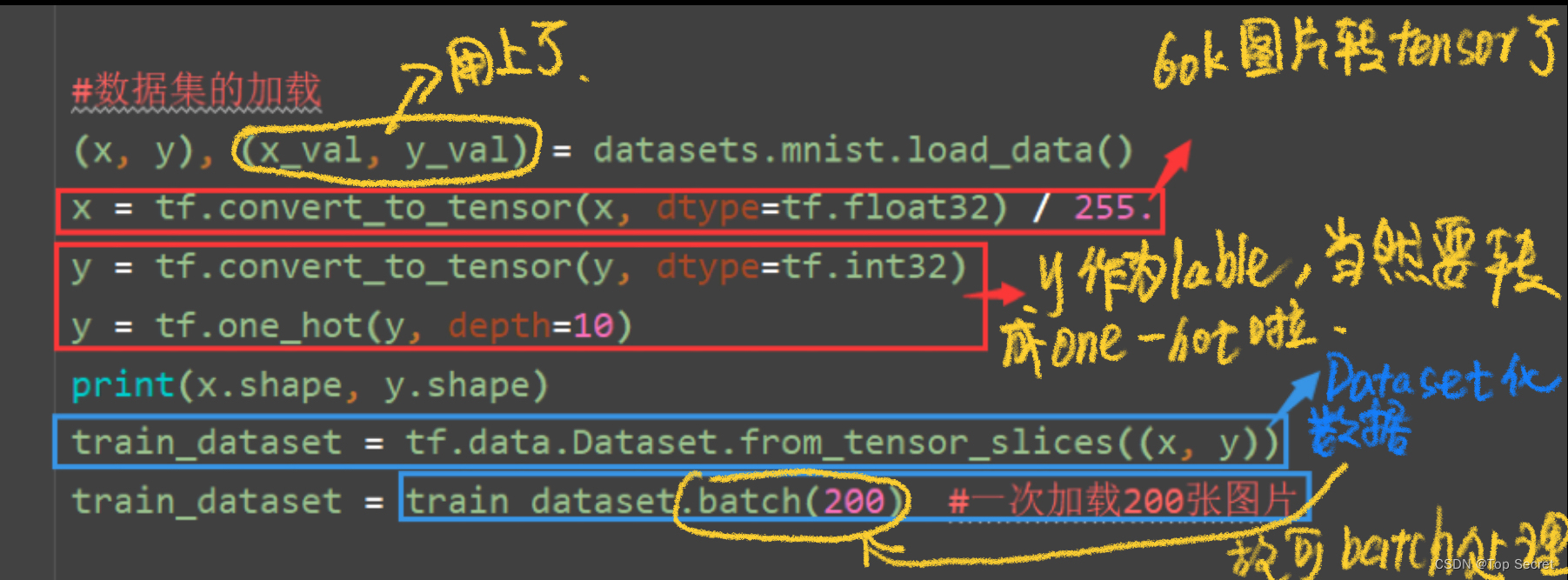

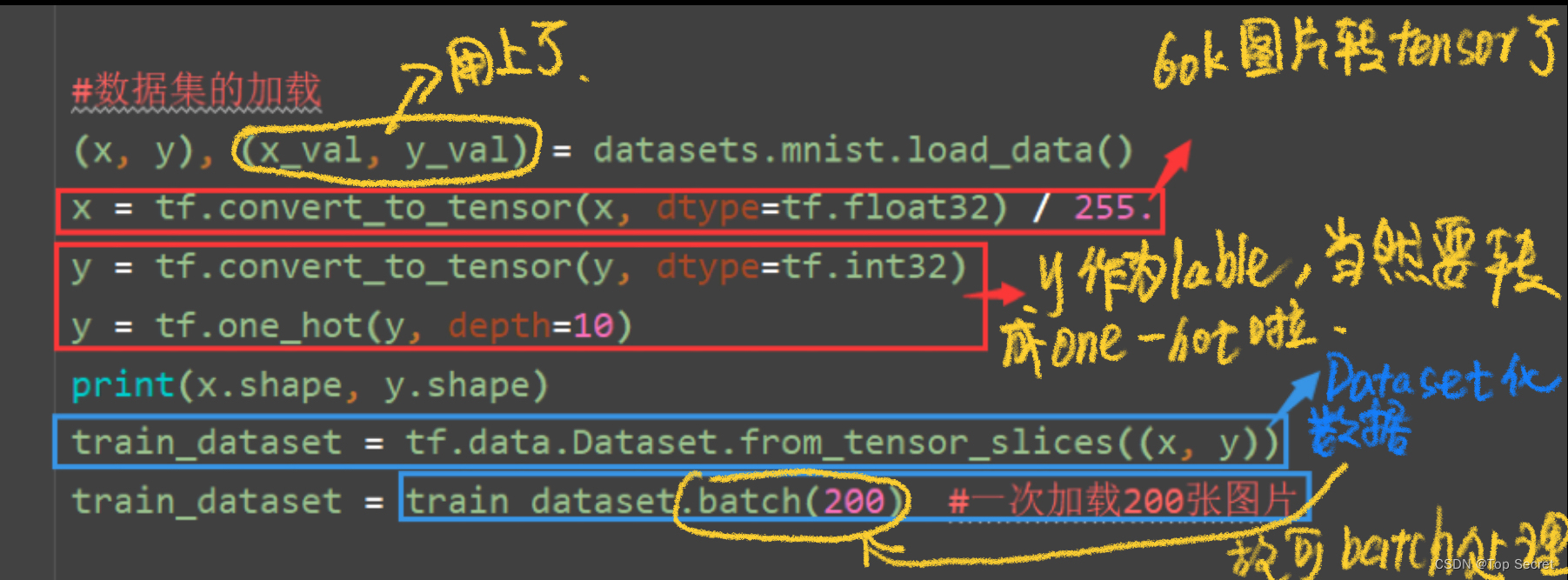

    import os

    # Set before importing TensorFlow so that it also applies to messages logged during import
    os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

    import tensorflow as tf
    from tensorflow import keras
    from tensorflow.keras import layers, optimizers, datasets

    # Load the dataset
    (x, y), (x_val, y_val) = datasets.mnist.load_data()
    x = tf.convert_to_tensor(x, dtype=tf.float32) / 255.
    y = tf.convert_to_tensor(y, dtype=tf.int32)
    y = tf.one_hot(y, depth=10)
    print(x.shape, y.shape)
    train_dataset = tf.data.Dataset.from_tensor_slices((x, y))
    train_dataset = train_dataset.batch(200)  # load 200 images per batch

    # Prepare the network structure and optimizer
    model = keras.Sequential([  # 3-layer structure
        layers.Dense(512, activation='relu'),
        layers.Dense(256, activation='relu'),
        layers.Dense(10)])
    optimizer = optimizers.SGD(learning_rate=0.001)

    # One training epoch
    def train_epoch(epoch):
        # Step4. loop
        for step, (x, y) in enumerate(train_dataset):
            with tf.GradientTape() as tape:
                # [b, 28, 28] => [b, 784]
                x = tf.reshape(x, (-1, 28*28))
                # Step1. compute output
                # [b, 784] => [b, 10]
                out = model(x)
                # Step2. compute loss
                loss = tf.reduce_sum(tf.square(out - y)) / x.shape[0]

            # Step3. optimize and update w1, w2, w3, b1, b2, b3
            grads = tape.gradient(loss, model.trainable_variables)
            # w' = w - lr * grad
            optimizer.apply_gradients(zip(grads, model.trainable_variables))

            if step % 100 == 0:
                print(epoch, step, 'loss:', loss.numpy())

    def train():
        # Run 30 epochs
        for epoch in range(30):
            train_epoch(epoch)

    if __name__ == '__main__':
        train()

Training results:

Supplement: os.environ['TF_CPP_MIN_LOG_LEVEL']

os.environ["TF_CPP_MIN_LOG_LEVEL"] can take four values, '0', '1', '2' and '3', which correspond to the four log levels INFO, WARNING, ERROR and FATAL (severity increases from left to right). The value must be a string, because os.environ only accepts strings.

When os.environ["TF_CPP_MIN_LOG_LEVEL"] = '0', the output includes: INFO + WARNING + ERROR + FATAL

When os.environ["TF_CPP_MIN_LOG_LEVEL"] = '1', the output includes: WARNING + ERROR + FATAL

When os.environ["TF_CPP_MIN_LOG_LEVEL"] = '2', the output includes: ERROR + FATAL

When os.environ["TF_CPP_MIN_LOG_LEVEL"] = '3', the output includes: FATAL
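The mapping above amounts to "show every level at or above the configured one". A small sketch that encodes the table and also shows why the value has to be a string; `LEVELS` and `shown_levels` are hypothetical names introduced here for illustration and are not part of TensorFlow.

```python
import os

# Assigning an int is rejected by os.environ; only strings are accepted.
rejected_int = False
try:
    os.environ["TF_CPP_MIN_LOG_LEVEL"] = 2
except TypeError:
    rejected_int = True

# The usual placement is before `import tensorflow`.
os.environ["TF_CPP_MIN_LOG_LEVEL"] = "2"

# Hypothetical helper mirroring the table in the text.
LEVELS = ["INFO", "WARNING", "ERROR", "FATAL"]

def shown_levels(min_level):
    """Return the log levels that remain visible for a given setting."""
    return LEVELS[int(min_level):]

print(shown_levels(os.environ["TF_CPP_MIN_LOG_LEVEL"]))  # ['ERROR', 'FATAL']
```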
